The Prime Minister has been upbraided by the Office for Statistics Regulation (part of the UK Statistics Authority) for his assertion that child poverty is falling, when on all the tests used by the government the opposite is true. I’m not greatly enamoured of those tests. I’ve considered the case for the standard test of ‘relative’ poverty, 60% of the median income, in other work: it’s not bad, but we need to accept that it’s a pointer, not an authoritative measure. The claim that the figures for 2010/11 represent a test of ‘absolute poverty’ is particularly suspect. Having said that, however, there’s no real excuse for blustering that poverty has been falling, when your own figures say that’s not so.

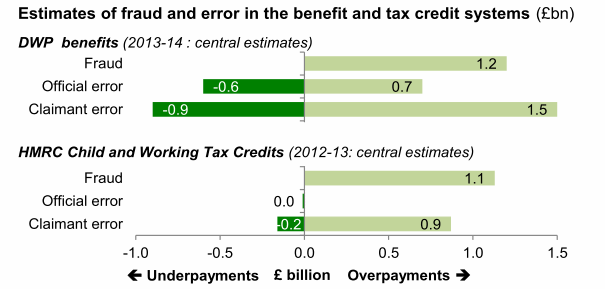

This is part of a wider problem, and one we’ve seen increasingly in recent years. The UK Statistics Authority was formed in the hope that it would be possible to maintain confidence in the integrity of official statistics. Over the last ten years, however, we’ve seen a growing contempt for statistical evidence, shown in the treatment of figures about crime, social security claims, incapacity, the management of coronavirus and more. It happens whenever departments publish figures that are not official, when the press is steered to have a go at popular targets like migration or benefit fraud, and when ministers just make stuff up. There is a cost to undermining public trust: it’s not just that some figures can’t be believed, but that everything becomes open to doubt.